How Reelsy AI Content Studio Turns One Idea Into TikTok-Ready Videos with GPT Image 2 + Seedance

Most short-form teams do not have an idea problem.

They have a production shape problem.

Someone has a product screenshot, a customer story, a blog post, a founder note, a rough campaign angle, or a viral format they want to test. Then the workflow breaks into too many small decisions:

- What is the hook?

- What should the first frame show?

- How many beats should the video have?

- Which visual style can hold the whole piece together?

- How do we turn a static concept into motion without losing the product, face, UI, or story?

- How do we make enough variations to test without hiring another editor?

That is the job of Reelsy AI Content Studio.

It is not a generic AI video playground. It is a production workflow for turning one input into a ready-to-test short video. The important part is the model pairing: GPT Image 2 creates the motion-ready visual board. Seedance turns that board into a short video.

The old way is to ask a video model to invent everything at once. That sounds convenient, but it creates weak outputs because too many decisions are compressed into one prompt. The better workflow separates the problem into two clean stages:

- Design the reference board with GPT Image 2

- Animate that board with Seedance

That one split removes a lot of failure modes.

Why This Workflow Exists

TikTok, Instagram Reels, and YouTube Shorts all reward fast testing. A team does not need one perfect video. It needs a steady stream of sharp, testable pieces with clear hooks, stable visuals, and enough variation to learn what the audience actually responds to.

The hard part is that high-volume short-form production usually collapses into chaos.

If every video starts from a blank prompt, the result is unpredictable. If every video goes through a full manual edit, the workflow is too slow. If everything is forced into rigid templates, the output starts to feel recycled.

Content Studio is built around a more practical unit:

Input -> Angle -> Motion-Ready Board -> Seedance Video -> Variants

That pipeline shape matters. It gives each stage one job.

The input carries the raw material. The angle decides what the video is trying to say. The board locks the visual timeline. Seedance adds motion. Variants let the team test different hooks or story shapes without rebuilding the whole asset from zero.

That is the difference between generating random AI clips and running a content system.
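As a rough illustration of "one job per stage," the five-step unit above can be modeled as a single record that always knows which stage comes next. This is a minimal sketch, not Reelsy's actual schema: `ContentRun`, its field names, and `next_stage` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ContentRun:
    """One pass through Input -> Angle -> Board -> Video -> Variants.

    Every name here is hypothetical and chosen only for illustration.
    """
    raw_input: str                 # the raw material the user already has
    angle: str = ""                # what the video is trying to say
    board_layout: str = ""         # e.g. "2x3 storyboard", set once a board exists
    video_ready: bool = False      # True after the motion stage has run
    variants: List[str] = field(default_factory=list)

    def next_stage(self) -> str:
        """Return the first stage that has not been completed yet."""
        if not self.angle:
            return "angle"
        if not self.board_layout:
            return "board"
        if not self.video_ready:
            return "video"
        return "variants"


run = ContentRun(raw_input="product screenshot")
print(run.next_stage())  # angle
```

Because each stage fills in exactly one part of the record, a failed run points at a specific stage instead of at "the prompt."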

The Real Product: A Motion-Ready Board

The most important object in Content Studio is not the final MP4.

It is the motion-ready board.

For the Image to 15s video workflow, Content Studio asks GPT Image 2 to create one generated image that contains a chronological visual timeline. Depending on the brief, that board can be:

- A 2x3 storyboard

- A 3x3 storyboard

- A 4x4 editorial board

- A motion sheet

- An app flow sheet

- A commercial board

- A vertical sequence

This board is not decoration. It is the control surface for the video model.

The board tells Seedance what the subject looks like, how the scene progresses, which product or UI details must stay stable, how the camera should move, and what the final payoff should feel like. Instead of asking Seedance to guess a whole video from a paragraph, Reelsy gives it a visual plan.

That is good engineering taste: put the structure where the system can use it.

Why GPT Image 2 Is the First Step

Short-form video quality usually fails before motion begins.

If the first frame is weak, the video is already in trouble. If the product shape changes between shots, viewers feel it immediately. If the UI text is messy, the ad looks fake. If the character looks different across beats, the whole piece loses trust.

GPT Image 2 is useful in Content Studio because the image stage needs instruction-following, layout control, and visual consistency before anything moves.

In the Reelsy workflow, GPT Image 2 is not asked to produce random pretty stills. It is asked to produce a board with constraints:

- One generated image, not separate files

- Clear chronological reading order

- Stable subject, product, UI, character, outfit, background, lighting, and color palette

- Large readable panels, not tiny clutter

- Minimal labels only when they help the board

- No fake metrics, unsupported product claims, watermarks, or noisy text

That discipline matters. A short video is a sequence, so the still image has to behave like a sequence before it becomes motion.

Why Seedance Is the Second Step

Seedance is the motion layer.

Once the board exists, Seedance receives a much cleaner job: animate the reference board as a continuous short-form sequence. It can treat the panels as ordered beats rather than inventing the entire structure from scratch.

For grid boards, the video follows the panels left-to-right and top-to-bottom. For vertical sequences, it moves top-to-bottom. For app flow sheets, it moves screen-to-screen. For motion sheets, it follows the subject through the action sequence.

That gives Seedance three things video models need:

- Identity anchors: the same product, person, app screen, or visual style across the whole piece

- Motion path: a readable progression from one beat to the next

- Timing logic: a 15-second structure instead of a vague instruction to "make it dynamic"

This is why GPT Image 2 + Seedance is stronger than treating image generation and video generation as two unrelated tools. GPT Image 2 defines the board. Seedance executes the motion.
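To make the reading order and the 15-second timing concrete, here is a small sketch that walks a grid board left-to-right, top-to-bottom and gives every panel a time window. The even split is an assumption made for illustration; the article does not say how Content Studio actually distributes time across panels, and `panel_schedule` is a hypothetical helper.

```python
from typing import List, Tuple


def panel_schedule(
    rows: int, cols: int, total_seconds: float = 15.0
) -> List[Tuple[int, int, float, float]]:
    """Return (row, col, start, end) for each panel in reading order.

    Assumes every panel gets an equal share of the total duration.
    """
    if rows <= 0 or cols <= 0:
        raise ValueError("rows and cols must be positive")
    step = total_seconds / (rows * cols)
    schedule = []
    index = 0
    for row in range(rows):          # top-to-bottom
        for col in range(cols):      # left-to-right within a row
            start = round(index * step, 2)
            end = round((index + 1) * step, 2)
            schedule.append((row, col, start, end))
            index += 1
    return schedule


# A six-panel board laid out as 3 rows by 2 columns: 2.5 seconds per panel.
for beat in panel_schedule(rows=3, cols=2):
    print(beat)
```

A vertical sequence is the same call with `cols=1`, which reduces the walk to plain top-to-bottom.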

What Content Studio Can Start From

Content Studio is designed around common creator and marketing inputs, not model parameters.

A user can start with:

- A product image

- A website or product URL

- A screenshot

- A blog post or article

- Existing copy

- A creative brief

- Reference images

- A viral format or generated case from the library

The point is not to make users choose from a wall of model settings. The point is to ask for the business material they already have and turn it into a video structure.

For example:

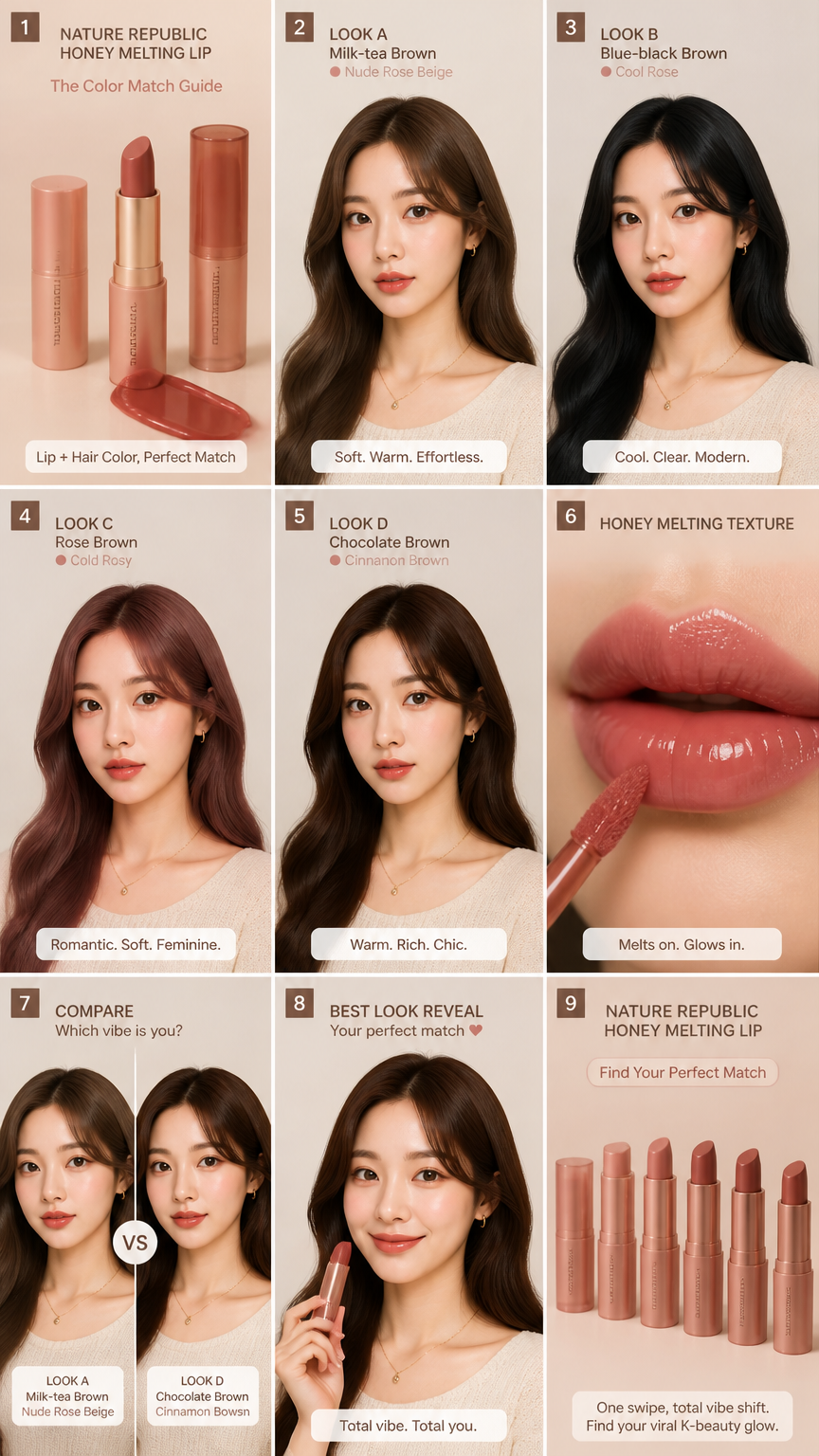

- A skincare brand can upload a product image and ask for a pain-solution ad.

- A SaaS founder can upload an app screenshot and ask for a feature walkthrough.

- A creator can paste an article and turn it into a hot-take short.

- An agency can choose a generated case from the Content Studio library and reuse the structure for a different client.

- A product marketer can write one sentence and get a 15-second motion board before spending video credits.

This is the right abstraction. Users care about the input and the outcome. The system should own the model choreography.

The Workflow Inside Reelsy

A typical Content Studio run looks like this.

1. Choose the Use Case

The user starts with a production goal, not a model.

Current workflows include:

- Article to short

- Product ad

- Screenshot to ad

- Script to short

- Image to 15s video

Each use case is limited to a different set of facts. That matters because a product ad should not invent certifications, reviews, prices, or discounts. A screenshot ad should not guess unreadable UI text. An article-to-short video should preserve the article's meaning instead of rewriting it into a different argument.

This is not academic purity. It prevents bad output.
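One way to picture these guardrails is a per-use-case list of claim types that may appear only if the user supplied them. The sketch below is an assumption about how such a check could look, not a description of Reelsy's implementation; `NEVER_INVENT`, the use-case keys, and `invented_claims` are hypothetical names.

```python
from typing import Dict, Set

# Claim types a use case must never make up (taken from the rules above).
NEVER_INVENT: Dict[str, Set[str]] = {
    "product_ad": {"certification", "review", "price", "discount"},
    "screenshot_to_ad": {"unreadable_ui_text"},
}


def invented_claims(use_case: str, draft_claims: Set[str], supplied: Set[str]) -> Set[str]:
    """Return guarded claim types that appear in the draft without user backing."""
    guarded = NEVER_INVENT.get(use_case, set())
    return (draft_claims & guarded) - supplied


# The draft mentions a price, but the user only supplied feature details.
print(invented_claims("product_ad", {"price", "feature"}, {"feature"}))  # {'price'}
```

If the user does provide the price, the same call returns an empty set and the draft passes.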

2. Generate the Angle and Script Plan

Content Studio turns the input into a concise creative direction:

- Optimized hook

- Campaign angle

- Narration beats

- Visual direction

- Scene or board plan

- Variant strategy

For article repurposing, the plan might choose a summary, hot take, or step-by-step structure. For product ads, it might choose pain-solution, feature-benefit, or social proof. For image-to-video, it chooses the board format Seedance can animate most reliably.

This planning stage keeps the video from becoming a loose pile of visuals.

3. Build the GPT Image 2 Board

For the Image to 15s video path, Reelsy asks GPT Image 2 for a single motion-ready board.

A good board prompt includes:

- The ratio, usually 9:16

- The intent, such as app demo, product ad, routine, story, or character animation

- The board layout, such as 2x3 storyboard or motion sheet

- The campaign angle and hook

- The narration beats

- The visual direction

- Any reference images and their roles

- Constraints for continuity, lighting, typography, and product shape

The result is a visual timeline that can be reviewed before video generation. This is important: users can confirm the board before spending credits on the motion stage.
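The prompt ingredients listed above can be assembled mechanically. The following is a minimal sketch of that assembly under stated assumptions: `BoardBrief`, its fields, and the exact wording of the output are hypothetical, and the resulting string is not claimed to match the prompt Reelsy sends to GPT Image 2.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class BoardBrief:
    """Hypothetical container for the board prompt ingredients."""
    intent: str                    # e.g. "product ad", "app demo"
    layout: str                    # e.g. "2x3 storyboard", "motion sheet"
    angle: str
    hook: str
    beats: List[str]
    visual_direction: str
    ratio: str = "9:16"
    references: Dict[str, str] = field(default_factory=dict)  # image name -> role
    constraints: List[str] = field(default_factory=list)


def build_board_prompt(brief: BoardBrief) -> str:
    """Join the brief into one plain-text prompt, beats in chronological order."""
    lines = [
        f"Create one {brief.ratio} image: a {brief.layout} for a {brief.intent}.",
        f"Campaign angle: {brief.angle}",
        f"Hook: {brief.hook}",
        "Narration beats, in chronological order:",
    ]
    lines += [f"  {i}. {beat}" for i, beat in enumerate(brief.beats, start=1)]
    lines.append(f"Visual direction: {brief.visual_direction}")
    for name, role in brief.references.items():
        lines.append(f"Reference {name}: {role}")
    if brief.constraints:
        lines.append("Constraints: " + "; ".join(brief.constraints))
    return "\n".join(lines)


brief = BoardBrief(
    intent="product ad",
    layout="2x3 storyboard",
    angle="pain-solution",
    hook="Wrinkled shirt, ten minutes to checkout",
    beats=["Wrinkled shirt in hotel room", "Steamer heats up", "Clean packed bag"],
    visual_direction="candid travel creator look",
    references={"steamer.png": "product shape and color"},
    constraints=["same product shape in every panel", "no fake metrics"],
)
print(build_board_prompt(brief))
```

Keeping the brief as structured data means the same fields can later feed the motion prompt or a variant without re-parsing free text.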

4. Animate with Seedance

After the board is approved, Seedance turns it into a 15-second short.

The motion prompt tells Seedance to treat the board as a timeline, not a static collage. It should animate panels in reading order, preserve the subject and product details, and avoid inventing extra panels or unsupported claims.

This makes the final video more controllable than a one-shot text-to-video prompt.

5. Review, Regenerate, Export

The generated variant card supports the normal production actions:

- Preview

- Edit hook

- Replace cover

- Regenerate

- Export

The practical win is not only that one video gets made. The win is that a working structure can be reused. If one angle performs well, the team can regenerate more like it, change the hook, adjust the CTA, or run the same structure for a related audience segment.

Why Slideshows Still Matter, But Video Completes the Workflow

Swipeable slideshows are powerful because they force active engagement. Every swipe is a small decision. That format is great for education, lists, routines, contrarian opinions, and story reveals.

But many teams do not want to stop at slideshows. They want the same structured thinking turned into a native short video with motion, pacing, sound, and export-ready timing.

That is where Content Studio changes the original slideshow idea.

Instead of producing six or seven static slides and stopping there, Reelsy uses the slideshow logic as a storyboard layer:

- Hook slide becomes the opening beat

- Middle slides become the motion path

- Proof or detail slides become close-ups and transitions

- CTA slide becomes the final payoff

Then Seedance turns that structure into a video.

So the strategy is not "slideshows versus videos." The better system is to use slideshow-style structure as the planning layer for video generation.
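The slide-to-beat mapping above is simple enough to write down as a lookup. This is only an illustrative sketch; the role keys and the `slides_to_beats` helper are hypothetical.

```python
from typing import Dict, List

# Slide role -> video beat, following the mapping described above.
SLIDE_ROLE_TO_BEAT: Dict[str, str] = {
    "hook": "opening beat",
    "middle": "motion path",
    "proof": "close-ups and transitions",
    "cta": "final payoff",
}


def slides_to_beats(slide_roles: List[str]) -> List[str]:
    """Translate an ordered slideshow outline into ordered video beats."""
    return [SLIDE_ROLE_TO_BEAT[role] for role in slide_roles]


print(slides_to_beats(["hook", "middle", "middle", "proof", "cta"]))
```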

The Formats That Work Best in Content Studio

The most reliable formats are simple because simple formats give the model fewer ways to misunderstand the job.

Pain to Solution

This format works well for ecommerce, SaaS, apps, and service offers.

The board usually opens with the problem, moves into the cost of ignoring it, shows the product or workflow, and ends with a clean payoff. Seedance can animate it as a sequence of contrast, reveal, use, and result.

Listicle

Listicles are strong because the structure creates built-in progression.

For Content Studio, the important part is visual separation. Each panel should represent one item or one rank. The final beat should carry the strongest visual payoff, not just another caption.

Routine Breakdown

Routines work because they are naturally sequential.

A motion sheet or vertical sequence is often better than a generic collage here. The viewer should feel the action moving through steps: setup, first move, key detail, result, repeatable takeaway.

App Flow

For SaaS and mobile products, an app flow sheet keeps the UI stable.

Instead of asking a video model to guess product screens, Content Studio can lock a clean visual path: dashboard, action, result, export, CTA. Seedance then animates between screens with smoother continuity.

Product Demo

Product demos need object stability.

The board should show the product from consistent angles, preserve packaging and shape, and avoid fake review text. This is where the board matters most. If the product is stable in the board, Seedance has a stronger anchor for motion.

What Makes a Good Prompt in This System

A weak prompt says:

Make a viral video about this product.

A useful Content Studio prompt says:

Create a 15-second vertical product demo for a compact travel steamer. Show the problem of wrinkled clothes in a hotel room, the steamer heating up, a close-up pass over a shirt sleeve, the before-and-after result, and a final clean packing shot. Make it feel like a candid travel creator video, not a studio commercial.

The second prompt is better because it gives structure:

- Subject

- Context

- Action beats

- Visual tone

- Final payoff

- Negative constraint

GPT Image 2 can turn that into a board. Seedance can turn the board into motion. The system has something real to work with.

Why Variants Matter More Than One Perfect Output

Short-form growth is testing.

One video rarely proves anything. A team needs different hooks, different angles, and different emotional registers around the same offer. But making each variation from scratch is expensive.

Content Studio treats variants as structured changes, not random regeneration.

For the same input, the system can create:

- A controversial hook

- An educational list

- An emotional story

- A pain-solution ad

- A feature-benefit ad

- A social-proof angle

- A summary

- A hot take

- A step-by-step version

The trick is preserving the useful parts while changing the test variable. If the product, audience, and visual identity stay stable, the team can learn whether the hook or angle is driving performance.

That is how you scale testing without turning the workflow into a mess.
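The idea of "structured changes, not random regeneration" can be sketched as holding everything constant except the variable under test. The names below (`VariantSpec`, `angle_variants`) are hypothetical and only illustrate the principle.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class VariantSpec:
    """Hypothetical description of one variant; frozen so a base spec cannot drift."""
    product: str
    audience: str
    visual_identity: str
    angle: str


def angle_variants(base: VariantSpec, angles: List[str]) -> List[VariantSpec]:
    """Copy the base spec once per angle, changing only the angle."""
    return [replace(base, angle=angle) for angle in angles]


base = VariantSpec(
    product="compact travel steamer",
    audience="frequent travelers",
    visual_identity="candid creator style",
    angle="pain-solution",
)
for variant in angle_variants(base, ["feature-benefit", "social-proof", "hot take"]):
    print(variant.angle, "|", variant.product)
```

Since product, audience, and visual identity are identical across the list, a performance difference between two variants can be attributed to the angle with more confidence.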

Where This Fits With the Rest of Reelsy

Content Studio is the broad creation entry point.

If you already have a viral reference and want to adapt its pacing, use Reelsy Viral Clone. If you need deeper model guidance for multimodal video creation, read the Seedance 2.0 Creation Guide. If your team is trying to publish every day without breaking the process, pair this workflow with batch content systems.

Content Studio sits between those needs:

- More structured than a blank video prompt

- More flexible than a fixed template

- Faster than manual storyboard and edit work

- Safer than asking one model to invent everything

It is the place where rough inputs become testable videos.

The Practical Production Rhythm

A simple production cycle can run like this:

- Collect 5 to 10 raw inputs: products, screenshots, articles, offer notes, or reference ideas.

- Use Content Studio to generate one motion-ready board for each.

- Approve the boards that have strong first-frame clarity.

- Send the best boards into Seedance for 15-second videos.

- Export the strongest outputs.

- Regenerate more variants from the winners.

That is a sane workflow because it puts review before the expensive motion stage.

Bad systems hide the important decision until the final video is done. Good systems expose the important decision early. In this case, the decision is simple: does the board have a strong enough visual sequence to become a short video?

If yes, animate it. If not, fix the board first.
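That review gate can be expressed as a small split step that runs before any video generation. The sketch assumes a human reviewer has already marked each board; `Board`, `approved`, and `split_for_motion` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Board:
    name: str
    approved: bool  # set by a reviewer after checking first-frame clarity and sequence


def split_for_motion(boards: List[Board]) -> Tuple[List[Board], List[Board]]:
    """Return (boards to animate, boards to fix first), preserving input order."""
    animate = [b for b in boards if b.approved]
    fix_first = [b for b in boards if not b.approved]
    return animate, fix_first


boards = [
    Board("steamer demo", approved=True),
    Board("app walkthrough", approved=False),
    Board("skincare routine", approved=True),
]
animate, fix_first = split_for_motion(boards)
print([b.name for b in animate])    # ['steamer demo', 'skincare routine']
print([b.name for b in fix_first])  # ['app walkthrough']
```

Only the first list moves on to the motion stage, so video credits are spent on boards that have already passed review.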

The Bottom Line

The next useful content workflow is not "AI writes a script" or "AI makes a video."

That is too vague.

The useful workflow is:

One input -> GPT Image 2 motion-ready board -> Seedance short video -> testable variants

That is what Reelsy AI Content Studio is built to do.

It takes the structured thinking that makes slideshows work, upgrades the visual planning layer with GPT Image 2, and turns the result into motion with Seedance. The output is not just a clip. It is a repeatable content production system for creators, ecommerce teams, SaaS marketers, and agencies that need to test more ideas without turning production into chaos.

Start from the input you already have. Let Reelsy build the board. Approve the structure. Then animate it.

That is the clean path from idea to short video.